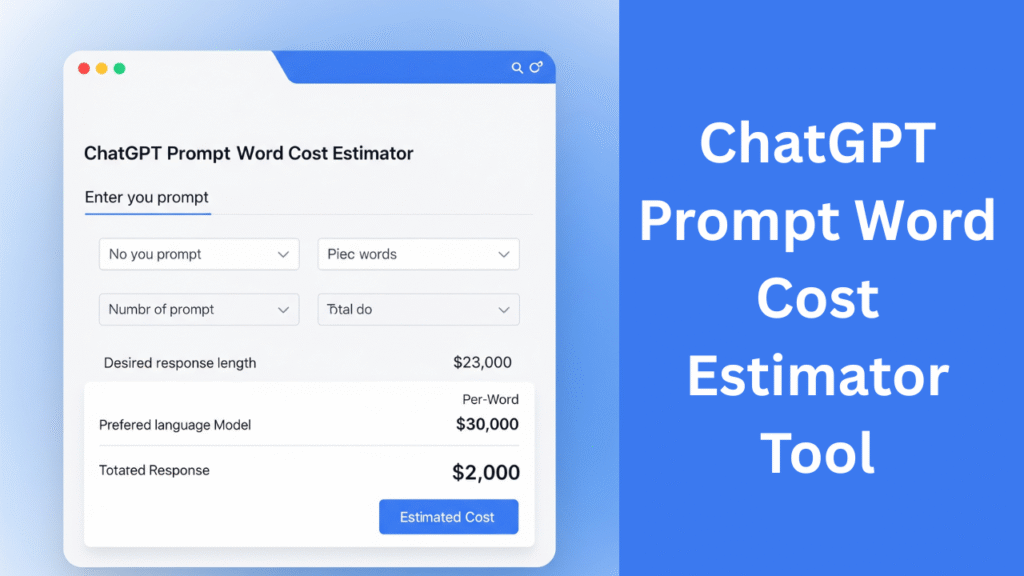

ChatGPT Prompt Cost Estimator

Calculate your AI expenses based on word count and discover optimization strategies

Understanding AI Costs

1 token ≈ 0.75 words. Longer prompts consume more tokens.

Advanced models like GPT-4 cost 30x more than GPT-3.5.

Most providers offer discounts for high-volume usage.

Clear, concise prompts reduce costs and improve results.

Optimizing Your ChatGPT Prompt Costs

As AI language models become increasingly integral to content creation, research, and development, understanding and managing costs has never been more important. This guide explores how ChatGPT pricing works and how to maximize value from your AI interactions.

How AI Pricing Models Work

ChatGPT and similar AI models charge based on token usage rather than word count. Tokens represent chunks of text – approximately 0.75 words per token for English text. This means a 100-word prompt consumes about 133 tokens.
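As a rough illustration of that conversion, here is a minimal Python sketch. It assumes the 0.75 words-per-token rule of thumb stated above; the function name `estimate_tokens` is made up for this example, and a real tokenizer will give somewhat different counts.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb from the text; real tokenizers vary


def estimate_tokens(word_count: int) -> int:
    """Approximate the token count for English text from a word count."""
    if word_count < 0:
        raise ValueError("word_count must be non-negative")
    return round(word_count / WORDS_PER_TOKEN)


print(estimate_tokens(100))  # about 133, matching the 100-word example
```

Treat the result as a budgeting estimate only; code, non-English text, and unusual formatting can push the ratio noticeably higher.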

Pricing varies significantly between models:

GPT-3.5: the most cost-effective option for everyday tasks, at $0.002 per 1K tokens.

GPT-4: a premium model with enhanced capabilities, priced at $0.06 per 1K tokens.

Currently free, with potential future pricing around $0.0005 per 1K tokens.

Mid-range option at $0.011 per 1K tokens, known for long-context handling.
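To see how these per-1K rates turn into a dollar figure, the sketch below multiplies a token count by a rate. The rates are copied from the list above; the tier labels (`everyday`, `premium`, `mid_range`) and the function name are hypothetical, chosen only for this example, and the rates themselves may be out of date.

```python
# Rates in USD per 1,000 tokens, copied from the list above.
# The tier labels are hypothetical names chosen for this sketch.
RATE_PER_1K = {
    "everyday": 0.002,
    "premium": 0.06,
    "mid_range": 0.011,
}


def estimate_cost(tokens: int, tier: str) -> float:
    """Return the estimated cost in USD for `tokens` tokens at the given tier."""
    return tokens / 1000 * RATE_PER_1K[tier]


# Price the same 133-token prompt at each tier.
for tier in RATE_PER_1K:
    print(f"{tier}: ${estimate_cost(133, tier):.6f}")
```

A single short prompt costs a fraction of a cent at any tier; the differences only become meaningful once you multiply by thousands of requests.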

7 Strategies to Reduce AI Costs

Implement these techniques to significantly decrease your ChatGPT expenses without sacrificing quality:

1. Streamline your prompts: Remove unnecessary pleasantries and context. AI doesn’t need “Hello” or “Thank you” – get straight to the request.

2. Use follow-up messages: Break complex requests into multiple messages instead of creating one massive prompt. This leverages context more efficiently.

3. Set response limits: Specify your desired output length (“Respond in 3 sentences” or “Limit to 200 words”).

4. Choose the right model: Reserve GPT-4 for tasks that truly require its advanced capabilities. Most content creation works well with GPT-3.5.

5. Use templates: Create standardized prompt frameworks that work across multiple requests.

6. Monitor usage: Regularly review your token consumption patterns to identify optimization opportunities.

7. Leverage batch processing: Group similar requests together to minimize context-switching overhead.
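Strategy 6 (monitoring usage) is the easiest one to put into practice. The sketch below is one possible way to do it, not a feature of any OpenAI library: a small, hypothetical `UsageLog` class that adds up prompt and reply tokens per task label so you can see where most of your tokens go. The labels and token counts are invented for illustration.

```python
from collections import defaultdict


class UsageLog:
    """Accumulates token counts per task label (labels are hypothetical)."""

    def __init__(self) -> None:
        self._totals: dict[str, int] = defaultdict(int)

    def record(self, label: str, prompt_tokens: int, reply_tokens: int) -> None:
        # Both prompt and reply tokens are billable, so track their sum.
        self._totals[label] += prompt_tokens + reply_tokens

    def totals(self) -> dict[str, int]:
        """Return total tokens per label, largest consumer first."""
        return dict(sorted(self._totals.items(), key=lambda kv: kv[1], reverse=True))


log = UsageLog()
log.record("summaries", prompt_tokens=400, reply_tokens=150)
log.record("emails", prompt_tokens=120, reply_tokens=80)
log.record("summaries", prompt_tokens=380, reply_tokens=140)
print(log.totals())
```

Sorting by total puts the biggest consumer first, which is usually where trimming prompts or switching models pays off most.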

When to Invest in Higher-Cost Models

While cost-saving is important, some scenarios justify premium models:

Creative endeavors: GPT-4 produces more nuanced and creative content for marketing copy, storytelling, and conceptual work.

Technical tasks: Complex code generation, data analysis, and technical writing benefit from GPT-4’s enhanced reasoning.

Precision-critical applications: When accuracy is paramount (medical information, legal documents), the investment in GPT-4 pays dividends.

The Future of AI Pricing

As competition intensifies and technology advances, we expect to see:

• Gradual price reductions across all models

• More tiered pricing options for different needs

• Bundled services with complementary AI tools

• Enterprise plans with volume-based discounts

• Specialized models optimized for specific industries

By understanding the factors that influence ChatGPT costs and implementing smart optimization strategies, you can harness the power of AI while maintaining control over your budget. Regularly revisit your approach as new models and pricing structures emerge in this rapidly evolving landscape.

- What is the ChatGPT prompt word cost estimator?

It’s a tool that calculates how much your prompt might cost based on word count and selected GPT model.

- How does the prompt cost estimator work?

It converts your word count into token estimates and applies OpenAI’s pricing to give cost predictions.

- Does this estimator support GPT-3.5 and GPT-4?

Yes, it supports all major OpenAI models including GPT-3.5, GPT-4, and GPT-4 Turbo.

- What is a token in ChatGPT pricing?

A token is a unit of text. On average, 1 token = ~0.75 words in English.

- How do I calculate the cost of a ChatGPT API call?

Use this estimator by inputting word count and selecting the model; it outputs estimated cost.

- Is this estimator accurate for OpenAI pricing?

It uses the latest OpenAI token rates, but your actual usage may vary slightly due to formatting or responses.

- What is the average word-to-token ratio?

Typically, 100 words are about 130–150 tokens depending on language and structure.

- Can I estimate both prompt and completion cost?

Yes, you can input prompt and expected output word counts separately.

- Is this cost estimation tool free?

Yes, this tool is completely free to use and doesn’t require login.

- Does the estimator include context tokens?

Yes, you can factor in system prompts or previous conversation tokens if needed.

- What are OpenAI’s token pricing rates?

Prices vary by model. GPT-3.5 is cheaper than GPT-4, and GPT-4o offers cost-effective performance.

- Can I use this for billing prediction?

Yes, it helps predict API costs before sending prompts to OpenAI.

- Why is token count important in ChatGPT?

OpenAI charges based on token usage, not word count, so estimating tokens is crucial.

- What’s the difference between input and output tokens?

Input tokens = your prompt; Output tokens = AI’s reply. Both are billable.

- Can I paste a full prompt to calculate tokens?

Advanced tools may allow pasting full prompts for exact token analysis.

- How do I reduce token usage?

Be concise in your prompts, avoid redundancy, and minimize system messages.

- Can I estimate cost for multi-turn conversations?

Yes, but you must add up all prompt + reply tokens from each exchange.

- Is this estimator good for budgeting AI usage?

Absolutely. It’s ideal for developers and content creators managing usage limits.

- Does this work for ChatGPT Plus plans?

It’s primarily for API-based pricing but can help estimate value for ChatGPT users.

- How often is the estimator updated?

It’s updated regularly to reflect OpenAI’s latest token prices and model options.
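For the multi-turn question in particular, the arithmetic is simple enough to sketch in a few lines of Python. This is an illustrative example, not the estimator's actual code: the function name and the token counts are made up, and it assumes a single per-1K rate applies to both prompt and reply tokens. If earlier turns are resent as context, count them in that turn's prompt tokens.

```python
def conversation_cost(exchanges, rate_per_1k):
    """Sum prompt and reply tokens over every exchange and price the total.

    `exchanges` is a list of (prompt_tokens, reply_tokens) pairs.
    """
    total_tokens = sum(prompt + reply for prompt, reply in exchanges)
    return total_tokens, total_tokens / 1000 * rate_per_1k


# Hypothetical three-turn conversation; token counts are made up.
turns = [(133, 200), (60, 150), (45, 300)]
tokens, cost = conversation_cost(turns, rate_per_1k=0.002)
print(tokens, f"${cost:.6f}")
```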
